Primary, Secondary, and the Data Stream Between

An industry debate caught my attention recently, the kind that swirls through professional inboxes and LinkedIn threads with a mix of genuine methodology questions and, let's be honest, some industry anxiety. The spark? A major AI tech company published a very large-scale research report and referred to the data as qualitative. Naturally, researchers, especially quallies, had thoughts. Myself included.

The debate raised real methodological questions (sample composition! rigor! transparency!). But the thread that kept pulling at me was more foundational: before we can argue about whether something is "qualitative," we probably need to agree on whether it's even primary research in the first place.

Quick footnote: I spend a whole lesson on this with my undergrad marketing research students. And based on what I've been seeing lately, maybe it's worth a refresher for all of us. 🤓

Primary Research: Designed for Your Questions

Primary research is data you collect for a specific purpose. You (or someone you hire) design the study, determine the methodology, define the sample, and collect new information to answer specific research questions. There's a direct link between the study objectives and the study outcomes. The research should tell a cohesive story.

Focus groups. In-depth interviews. Surveys you fielded. Observational research you conducted. AI-moderated interviews you commissioned. All primary — because the data didn't exist before someone went out to get it, for a defined purpose.

The defining characteristic: the data was designed for your questions. I sometimes refer to primary as "first person" to help my students understand. Is this MY data? MY research? MY data inquiry? Or is it someone else's?

Secondary Research: Someone Else's Primary

Secondary research is data that already exists — published, accessible, and typically created for someone else's purposes. Industry reports. Government data. Academic papers. White papers from other companies. Blog posts, news articles, magazine op-eds — all secondary for the reader/reviewer.

The lens to apply here: who was this study designed for, and why? That context shapes what the data can and can't tell you about your own specific situation.

Secondary research is incredibly valuable — it's how we contextualize findings, spot trends, and come to the primary research design phase better informed. But it doesn't replace primary research, and it was never meant to. It's a supporting function in the data ecosystem, not an all-encompassing solution.

Which brings me back to that industry debate. The report in question was primary research for the company that conducted it. The moment it was published, it became secondary research for everyone else. Apply the "who was this for, and why" lens, and a lot of the anxiety around findings like these becomes more manageable. Furthermore, even publishing the data was more for their sake than ours; we're simply observers of their research process, not stakeholders. This AI company did not owe it to us to publish their findings and their work. (And I'm sure their PR team is not mad about the discourse!)

The Classifications Debate

Now, I wholeheartedly agree with several of my industry colleagues that the term "qualitative" gets thrown around a little too loosely. Ask a practitioner what "qualitative" means, then ask an academic the same question, and you'll get two very different answers. I know because I've been both: eighteen years in the industry and five years teaching undergrads. And most MR courses treat quant as the superhero and qual as the creative sidekick.

I can reasonably say there is a definite difference in what "qualitative" means to the two camps of researchers. Practitioners consider qual a methodology: it involves probe work, depth, iteration. Academics and quant researchers consider any open-ended data to be "qualitative," as it relates to free response, verbatim, fluid text-based answers. Neither is wrong; in fact, they're both correct depending on the setting and use case.

I think that's partly why qual gets less emphasis than quant and survey work in higher education. Most MR classes refer to data in the quant sense, and qual gets a teeny portion of the overall considerations. Quant is more tangible: it's a harder skill, with bigger data sets. But qual is equally powerful, if not more so, when applied well.

That lack of formality is both our blessing and our curse. We have the freedom to explore in-depth responses but lack a "one way" of doing things, which opens up room for ill-trained researchers to make connections that may not exist. Qual is a soft skill; quant is a hard one. I think all teams need both to be successful.

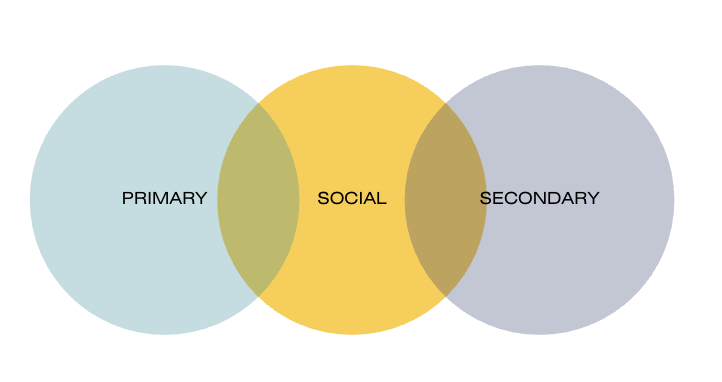

And Then There's Social

Here's where the traditional two-category framework gets genuinely interesting — and where most of my work at The Social Question lives.

Social data doesn't fit neatly into either bucket. (Which is kind of the point.)

Social media conversations, comment sections, community discussions — this data exists in the wild. Real people, real behavior, unscripted. In that sense it looks like secondary: it wasn't designed for your questions, and it already exists.

But when you collect it with intention — pulling it, structuring it, analyzing it against a specific research question — it starts to behave like primary research. You're making methodological choices. You're applying a framework. You're generating insight that didn't exist before you went looking.

That's where tools like ScribeQ come in. ScribeQ was built to pull Instagram Stories reply data so researchers can analyze it with the kind of rigor we'd apply to any primary data collection: not just skimming for themes, but actually working through conversational exchanges with a research lens. The raw data is social (it exists out there in the wild, albeit behind an influencer's account access), but the research process is primary. Since we hire our influencers for explicitly stated research functions, the data is primary and the responses are qualitative, yet the volume can edge into quantitative territory once we've passed 200 responses.

Where any given piece of social data lands on the primary/secondary spectrum depends on how you're collecting it, what research question you're applying it to, and how transparent you're being about the methodology. Context isn't a cop-out; it's the whole framework.

The Practical Takeaway

Whatever data source you're working with (a published industry report, a brand's internal study, a pile of Instagram comments), ask the same questions:

Who collected this, and why? What were their research objectives? How representative is it of my specific question? What am I actually using it for?

In my strong opinion, you have a professional duty to scan for secondary research and sources before scoping primary work. Knowing what's already out in the world makes your primary research sharper, not redundant. But secondary research will never replace the study you design for your own specific questions. That gap is exactly why primary research exists.

While we may gain access to methodology statements in secondary research, we're never given full access to all of the under-the-hood mechanics and documents. We only know what the authors choose to disclose. You have to proceed with caution if you're planning on making a big decision based on someone else's data. This applies to all decisions, personal and professional, and much of society has lost the plot regarding critical thinking when data is presented with confidence and authority.

Social data, like all data, requires that critical thinking layer. It's not a shortcut. But it's not a second-class citizen either — especially when it's collected and analyzed with intention.

And the next time a major tech company publishes a giant dataset and calls it something that makes your eye twitch? Apply the lens.